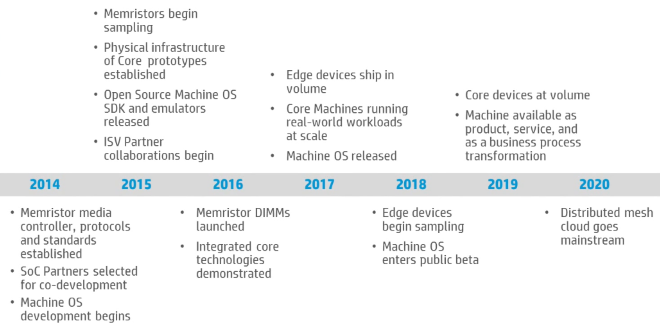

HP Labs announced that the first working prototype of The Machine will arrive in 2016, while an instruction-level emulator and an SDK will be available next year.

[youtube https://www.youtube.com/watch?v=QPQ1AheNro8&w=560&h=315]

Development of The Machine and its software environment is proceeding on schedule, according to Martin Fink, HP's CTO and head of HP Labs.

As of today, The Machine is expected to feature a single pool of mass memory beyond the CPU registers and cache, built from memristor banks that HP is currently developing in-house. Racks will be interconnected by fiber by default, and thanks to HP Labs' advances in silicon photonics those links could reach bandwidths of up to 20Tbps.

The Machine is being developed with an eye towards the analysis of massive data sets: its Universal Memory pool should move data to and from the CPU orders of magnitude faster than the mass storage on the market today. Its CPU consists of unspecified "special-purpose cores" that are said to deliver greater performance than today's CPUs while drawing power on the order of single-digit watts.

The pictured Universal Memory module is expected to hold 4TB of data at launch, and after further development a module of the same size will contain up to 100TB, according to Fink.
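To put the quoted figures in perspective, here is a rough back-of-envelope sketch. It uses only the numbers given above (4TB, 100TB, up to 20Tbps). Everything else is an assumption made for illustration: decimal units, a fully saturated link with no protocol overhead, and the idea that a whole module would be streamed over a single rack interconnect, which HP has not described. The function name `seconds_to_stream` is hypothetical.

```python
# Back-of-envelope figures taken from the article; everything else is an assumption.
LINK_TBPS = 20          # quoted peak interconnect bandwidth, terabits per second
MODULE_TB_LAUNCH = 4    # quoted module capacity at launch, terabytes
MODULE_TB_LATER = 100   # quoted capacity after further development, terabytes


def seconds_to_stream(capacity_tb: float, link_tbps: float = LINK_TBPS) -> float:
    """Ideal time to move `capacity_tb` terabytes over a `link_tbps` link.

    Assumes decimal units (1 TB = 8 Tb) and a fully saturated link with no
    protocol overhead, which real hardware will not achieve.
    """
    return capacity_tb * 8 / link_tbps


# Ratio between the two quoted module capacities.
growth = MODULE_TB_LATER / MODULE_TB_LAUNCH

print(f"capacity growth: {growth:.0f}x")
print(f"launch module: {seconds_to_stream(MODULE_TB_LAUNCH):.1f} s")
print(f"later module:  {seconds_to_stream(MODULE_TB_LATER):.1f} s")
```

Under these idealized assumptions, the planned capacity jump is 25x, and even the larger module could in principle be streamed across one peak-rate link in well under a minute. Real-world numbers would depend on details HP has not yet published.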

On the software side, multiple operating systems are being developed at HP Labs. The first, and most important, is a completely new OS called Carbon, built from the ground up with The Machine's special memory structure in mind. Customized versions of Linux and Android will come out before Carbon, to gain traction as fast as possible and to help software developers get used to the new memory hierarchy in a familiar software environment.

Will HP be able to deliver on its promises? Will we see a successful paradigm shift in data analysis? Or will it be another Itanic? Let us know in the comments.